
WST Operations

The WST Operations Work Package is dedicated to designing the science-driven operational framework and data flow architecture that will enable WST to deliver its ambitious scientific goals.

From survey design and scheduling to data reduction, analysis, and long-term archiving, Operations defines how WST will function on a daily and strategic level. The primary goal is to ensure a sustainable, flexible system capable of running multiple surveys in parallel while consistently producing high-quality, science-ready data products.

The Facility Simulator

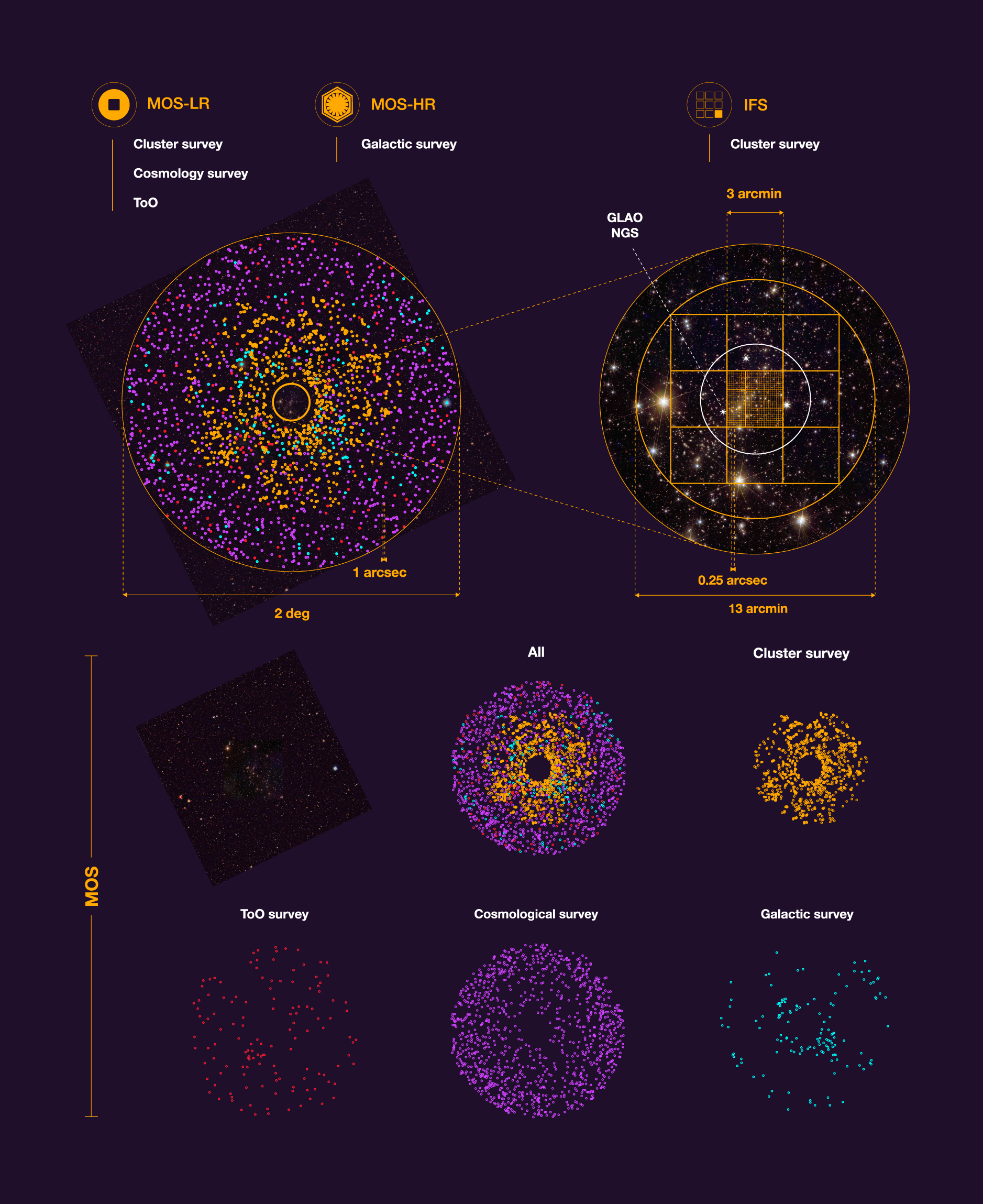

A cornerstone of the Operations Work Package is the Facility Simulator—a prototype survey planner and target allocation tool developed in collaboration with the Survey Planning team.

The Simulator will optimize observing efficiency across multiple simultaneous surveys—both multi-object spectroscopy (MOS) and integral-field spectroscopy (IFS)—while maintaining the agility to incorporate Targets of Opportunity and time-domain programs. This balance between efficiency and adaptability is essential to fulfilling WST’s scientific mission.
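As a rough illustration of the balance described above, the sketch below ranks targets from several surveys for a single pointing and lets a Target of Opportunity jump the queue. It is a toy greedy scheme under stated assumptions, not the Facility Simulator's actual algorithm: the `Target` fields, the survey labels, the priority scale, and the `n_slots` parameter are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    name: str
    survey: str            # hypothetical survey label
    priority: float        # hypothetical scale: higher means more urgent
    is_too: bool = False   # Target of Opportunity flag


def allocate(targets, n_slots):
    """Greedy toy allocation for one pointing.

    Targets of Opportunity are ranked first, then the remaining targets
    by descending priority (name breaks ties so the result is stable).
    """
    ranked = sorted(targets, key=lambda t: (not t.is_too, -t.priority, t.name))
    return ranked[:n_slots]


# Made-up targets from three hypothetical surveys.
targets = [
    Target("gal-001", "cosmology", 0.9),
    Target("star-042", "galactic", 0.7),
    Target("gal-002", "cosmology", 0.4),
    Target("transient-7", "time-domain", 0.5, is_too=True),
]

chosen = allocate(targets, n_slots=3)
print([t.name for t in chosen])
```

A realistic planner would also have to account for positioner reach, exposure-time budgets, and survey completeness, none of which this sketch attempts to model.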

Data Reduction and Analysis Pipelines (both MOS and IFS)

The WST will operate on a scale far beyond current spectroscopic facilities. While existing data processing frameworks will be carefully assessed, new pipelines will be developed to meet WST’s unique requirements.

Beyond traditional reduction workflows, Operations will explore advanced data analysis methods, including machine learning and data mining, to fully exploit the unprecedented volume of data. A flexible architecture will also be provided so that science users can plug in their own specialized analysis tools.

Sustainability is a key consideration. The outdated assumption that “computing is cheap” no longer holds. Power consumption, data transfer, and hardware costs must be carefully optimized. We will evaluate trade-offs between on-site processing, remote data centers, and near-archive reduction, ensuring efficiency while minimizing environmental impact. Meanwhile, advances in artificial intelligence promise to revolutionize operations—from automated data reduction and analysis to adaptive scheduling—enhancing both capability and efficiency.

Archive and Data Management

The WST Archive and Data Management System will store millions of spectra and data cubes in an accessible, sustainable, and standards-compliant way.

Comprehensive metadata tracking and quality control will be embedded throughout the data flow, ensuring alignment with FAIR principles and Virtual Observatory standards. Handling such immense data volumes—augmented by telescope telemetry, monitoring data, and real-time alerts—represents a significant challenge, demanding innovative design and robust scalability.

Science Operations Concept

The Science Operations Concept provides the overarching framework that integrates the MOS and IFS observing modes, despite their differing requirements for exposure cycles and calibration.

Designed with time-domain science in mind, the concept ensures efficient, dynamic operations that can respond to variable and transient phenomena. The Science Data Flow is central to this effort, enabling raw data to be transferred, processed, and quality-checked in near real time—an essential capability for time-critical observations and automated alerting.
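The transfer, process, and quality-check sequence can be pictured as a chain of small stages applied to each exposure as it arrives. The following is a minimal sketch under stated assumptions, not the WST data flow design: the `Exposure` fields, the stage functions, and the signal-to-noise threshold are hypothetical placeholders chosen only to show the shape of such a chain.

```python
from dataclasses import dataclass, field


@dataclass
class Exposure:
    exposure_id: str
    mode: str        # "MOS" or "IFS"
    snr: float       # hypothetical quality metric
    history: list = field(default_factory=list)  # stages applied so far


def transfer(exp):
    # Placeholder for moving raw data off the instrument.
    exp.history.append("transferred")
    return exp


def reduce_exposure(exp):
    # Placeholder for the mode-specific reduction pipeline.
    exp.history.append("reduced")
    return exp


def quality_check(exp, min_snr):
    # Record the verdict so every product carries its own QC trail.
    passed = exp.snr >= min_snr
    exp.history.append("qc_pass" if passed else "qc_fail")
    return passed


def run_data_flow(exposures, min_snr=5.0):
    """Run each exposure through all stages; split by QC outcome."""
    accepted, flagged = [], []
    for exp in exposures:
        exp = reduce_exposure(transfer(exp))
        (accepted if quality_check(exp, min_snr) else flagged).append(exp)
    return accepted, flagged


# Made-up exposures, one per observing mode.
incoming = [Exposure("exp-1", "MOS", 12.0), Exposure("exp-2", "IFS", 3.1)]
accepted, flagged = run_data_flow(incoming)
print([e.exposure_id for e in accepted], [e.exposure_id for e in flagged])
```

Keeping a per-exposure history is one simple way to embed provenance in the flow; a production system would more likely stream exposures through the stages concurrently rather than in a single loop.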

Towards a Transformational Era

The WST will generate data volumes unprecedented at ESO, marking a major step into the era of astronomical big data. By learning from current survey facilities, critically assessing existing methods, and developing innovative new approaches, the Operations Work Package will define a robust, future-ready operational model.

Our mission is to transform raw photons into science-ready data products that will deliver an enduring spectroscopic legacy for the global astronomical community.


Issue #2 The WST Chronicle